For some context: Recently I’ve been sharpening my Kubernetes skills by setting up a small 6-node k3s cluster at home. The place where I currently live doesn’t have a public IP address, so I chose to set up a Cloudflare Tunnel to expose services to the internet.

I chose to run the cloudflared daemon directly on the host machines, and the overall setup was quick and pain-free. The whole thing seemed to work well, but I noticed that over time (within hours) the tunneled services would start responding more slowly and eventually Cloudflare would display 523 errors.

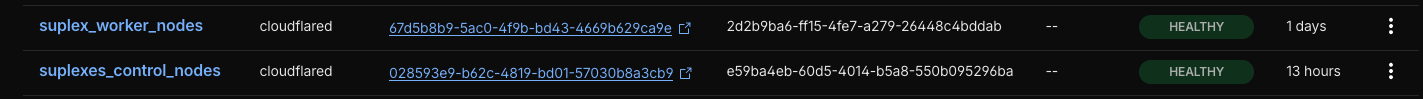

The Cloudflare dashboard was showing the tunnels as healthy, and the services were working correctly when accessed from the LAN (over their .internal domains).

The cloudflared logs were showing some errors that didn’t suggest any obvious solutions:

    $ journalctl -u cloudflared | grep 21:05
    Feb 23 21:05:13 suplex6 cloudflared[3473875]: 2026-02-23T20:05:13Z ERR failed to accept incoming stream requests error="failed to accept QUIC stream: timeout: no recent network activity" connIndex=3 event=0 ip=198.41.200.13
    Feb 23 21:05:13 suplex6 cloudflared[3473875]: 2026-02-23T20:05:13Z ERR failed to run the datagram handler error="context canceled" connIndex=3 event=0 ip=198.41.200.13
    Feb 23 21:05:13 suplex6 cloudflared[3473875]: 2026-02-23T20:05:13Z WRN failed to serve tunnel connection error="accept stream listener encountered a failure while serving" connIndex=3 event=0 ip=198.41.200.13
    Feb 23 21:05:13 suplex6 cloudflared[3473875]: 2026-02-23T20:05:13Z WRN Serve tunnel error error="accept stream listener encountered a failure while serving" connIndex=3 event=0 ip=198.41.200.13
    Feb 23 21:05:13 suplex6 cloudflared[3473875]: 2026-02-23T20:05:13Z INF Retrying connection in up to 1s connIndex=3 event=0 ip=198.41.200.13
    Feb 23 21:05:15 suplex6 cloudflared[3473875]: 2026-02-23T20:05:15Z ERR failed to accept incoming stream requests error="failed to accept QUIC stream: timeout: no recent network activity" connIndex=0 event=0 ip=198.41.200.73

Doing the lazy thing and setting up some staggered cloudflared service restarts in cron didn’t really do the trick (and wasn’t really a solution anyway). Some googling led me to this GitHub issue and this Reddit thread, which got me on the right track.

The tunnels by default try to use the QUIC protocol to talk to the Cloudflare edge, falling back to HTTP/2 based on some obscure criteria. QUIC is UDP-based, which can make it harder to troubleshoot, especially when you don’t have control of all of the network infrastructure involved. Switching the tunnels to use HTTP/2 seemed like a good thing to try.

A small gotcha is that it’s not a setting you can change in the Cloudflare dashboard (even though it might seem like it could be, under Speed -> Settings -> Protocol Optimization on the domain dashboard page) - you gotta set it on cloudflared itself.

I was already using Ansible to manage all of the cluster machines, so the change was pretty small - I had to make sure the cloudflared tunnel invocation in cloudflared.service used the --protocol http2 flag. Here’s (the gist of) the Ansible task file:

    - name: Create /usr/share/keyrings directory
      file:
        path: /usr/share/keyrings
        state: directory
        mode: '0755'
      become: true

    - name: Add Cloudflare GPG key
      ansible.builtin.get_url:
        url: https://pkg.cloudflare.com/cloudflare-public-v2.gpg
        dest: /usr/share/keyrings/cloudflare-public-v2.gpg
        mode: '0644'
      become: true

    - name: Add Cloudflare repository into sources list
      ansible.builtin.apt_repository:
        repo: 'deb [signed-by=/usr/share/keyrings/cloudflare-public-v2.gpg] https://pkg.cloudflare.com/cloudflared any main'
        filename: cloudflared
        state: present
      become: true

    - name: Install Cloudflared
      become: true
      apt:
        update_cache: true
        state: latest
        name:
          - cloudflared

    - name: Populate service facts
      ansible.builtin.service_facts:
      no_log: true

    - name: install the cloudflared service
      ansible.builtin.command:
        argv:
          - /usr/local/bin/cloudflared
          - service
          - install
          - ""
      # only run the installer when the unit does not exist yet
      when: ansible_facts.services['cloudflared.service'] is not defined
      no_log: true
      become: true

    ## START OF THE FIX
    - name: ensure cloudflared uses http2
      ansible.builtin.replace:
        path: /etc/systemd/system/cloudflared.service
        # once replaced, 'tunnel run' no longer matches, so reruns change nothing
        regexp: 'tunnel run'
        replace: 'tunnel --protocol http2 run'
        after: '\[Service\]'
      become: true
      register: cloudflared_protocol_replace

    - name: conditionally reload the systemd daemon if the cloudflared service file was modified
      ansible.builtin.systemd:
        daemon_reload: true
      when: cloudflared_protocol_replace.changed
      become: true
    ## END OF THE FIX

    - name: instantly restart cloudflared service
      ansible.builtin.systemd:
        name: cloudflared.service
        state: restarted
      become: true
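If you want the play to fail loudly when the flag goes missing (for example, if the unit file ever gets regenerated), a check along these lines could follow the tasks above. This is only a sketch: the task is hypothetical and not part of the original task file, and it assumes the unit lives at the same path the replace task edits.

```yaml
# Hypothetical follow-up task, not part of the original task file.
- name: verify the cloudflared unit requests http2
  ansible.builtin.command:
    argv:
      - grep
      - "-q"
      - "-e"
      - "--protocol http2"
      - /etc/systemd/system/cloudflared.service
  changed_when: false   # read-only check, never reports a change
  become: true
```

grep exits non-zero when the pattern is absent, so the task (and the play) fails instead of silently leaving the tunnel on QUIC.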

A cleaner solution would probably be to maintain a config file, but it was pretty late at night and this used the fewest moving parts to get the job done.
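For reference, the config-file route could look roughly like the sketch below. It assumes a locally-managed tunnel that reads /etc/cloudflared/config.yml. The tunnel ID and credentials path are placeholders, and this is untested against the token-based service install used above.

```yaml
# /etc/cloudflared/config.yml (sketch; the values below are placeholders)
tunnel: <TUNNEL-UUID>
credentials-file: /etc/cloudflared/<TUNNEL-UUID>.json
protocol: http2   # intended to match passing --protocol http2 on the command line
```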

[edit]: Alternatively you can set the env var TUNNEL_TRANSPORT_PROTOCOL to http2 when starting cloudflared - that’s the route I went with on another one of my servers, where the daemon is run inside a Docker container based on a pre-made image.
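A Compose file for that kind of setup might look something like this. The image name, the service name, and the use of TUNNEL_TOKEN are assumptions for illustration; only TUNNEL_TRANSPORT_PROTOCOL comes from the setup described above.

```yaml
# docker-compose.yml (sketch; image and service name are assumptions)
services:
  cloudflared:
    image: cloudflare/cloudflared:latest
    command: tunnel --no-autoupdate run
    restart: unless-stopped
    environment:
      TUNNEL_TOKEN: ${TUNNEL_TOKEN}        # supplied from the shell or an .env file
      TUNNEL_TRANSPORT_PROTOCOL: http2     # ask for HTTP/2 instead of QUIC
```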

And like that, the problem went away - the communication with the services behind the tunnels has been quick and solid over the last day (knock on wood), and another heisenbug got squashed.

The whole story isn’t super exciting, I just didn’t want to be the “nvm, I solved it myself!” guy on the internet :D